AI isn’t a content machine. It’s a research tool. Here’s how to use it like one.

Marketing Unfiltered #79 → Are You At The Wrong Starting Point?

Good Morning leaders, how is it May already?!

If you missed last week’s article, here is my flexible framework for how to guide your brand forward.

This week we have the Mr John Lyons returning to MU, breaking down why AI is your research partner, not a content machine.

Have a great weekend and we will see you this time next week.

The Wrong Starting Point

By John Lyons, Fractional CMO

Most marketers are using AI to generate output.

Blog posts, social copy, email sequences, ad creative. The tool does the work, you do the publishing, everybody looks busy.

The problem isn’t the tool. It’s the brief. Or more to the point, the complete lack of a brief.

Why? Because LLMs are predictive.

They don’t know what good looks like - in your category, for your brand, for your market. And based on the mediocre chocolate box art and video clips the tech bros swoon over, they don’t know what good creative looks like either.

They know, solely based on everything they’ve ever been fed, what’s most likely to be the case.

And here’s the bit that should make you uncomfortable as a marketer. They’ve been fed a lot of marketing content, and most of it’s total shit.

Gary Vee. Simon Sinek. Neil Patel. Scott Galloway.

All of them have been scraped, indexed, and weighted by the same models you’re relying on to do your marketing thinking for you. And of course those big, but completely misguided, voices have been echoed by their acolytes, which gives them and their thoughts undue authority. So they’re going to be picked up as fodder by your LLM ahead of marketers whose success is based on knowledge and training rather than an ability to self-promote.

So, if you don’t tell your LLM what good looks like before you ask it to do anything, it’ll average everything it knows. Which means it might hand you survivorship bias dressed up as strategy.

Shit in, Shit out. Just faster. If you’re cool with diarrhoea, sweet.

The Underlying Principle

Marketing is about the market.

Not the marketer, not the brand team, not the founder who built the product and knows it inside out.

The market. The people who will or won’t give you their money, depending on whether they can see their problem being solved by whatever you are selling.

This is where AI can actually be useful, if you use it properly. Not to generate content you couldn’t be bothered to write, but to get closer to how real people think about a category, a problem, a purchase.

That’s a research job. And it turns out LLMs are bloody good at it, provided you set them up correctly and treat their output with a degree of scepticism.

The distinction matters. AI as a content vending machine is commercially dubious. AI as a research tool with a trained eye over the output is genuinely valuable.

Start With Principles, Not Prompts

Before I built HatGPT, my custom research tool, I did something most people skip entirely. I sat down and wrote out my principles of marketing. What good looks like. How I actually think about product market fit, category entry points, ICP definition, messaging. Not a list of tactics. A set of instructions about how marketing really works.

Then I used AI to help me stress-test and structure it. I asked it to tell me its assumptions, its priorities, where it had gaps. I asked it to interview me, essentially, so I could respond to questions rather than build from scratch. That process surfaces the biases the model has picked up, and gives you the chance to correct them before they contaminate everything downstream.

My first prompt in building HatGPT.

This step is the one people too often skip. They open ChatGPT, type a prompt, and wonder why the output feels generic. It’s generic because you haven’t told it anything about how you think. You’re getting the average of the internet instead of a tool trained on your actual approach.

If you want it to think like a trained marketer, you have to tell it what that means.

Where To Get The Truth From

Once you’ve got the principles in place, the next question is where the tool goes for its information. This is the bit that makes the biggest practical difference.

The instinct is to point the AI at the brand’s website and ask it to work out who the customer is. Don’t do that. The website tells you what the brand thinks about itself and who it thinks its customer is.

That’s not the same thing as what the market actually needs.

Instead, move away from the category label and look at the problems that drive people into the category in the first place.

If you’re working with a B2B print supplier, nobody wakes up thinking “I need B2B print services.” They think “we need a brochure for the trade show” or “our letterheads look embarrassing.”

Those are the category entry points. That’s where you find the real language.

Step 1 from the ICP voice research framework knowledge file: identify relevant conversations.

HatGPT is set up to go to Reddit, Quora, trade forums, and industry discussion boards, and find the most repeated questions and topics around the problem the product solves. Not what the brand says it solves. What real people are actually talking about and asking about, in their own words.

That gives you something useful: a basis for synthetic research grounded in real data, not in what the AI guesses the customer might be like.
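To make "most repeated questions" concrete, here is a minimal sketch of one way to count recurring terms in the questions people ask. It is not how HatGPT does it. It assumes you have already collected forum posts as plain text, and the names `STOP_WORDS`, `extract_questions` and `recurring_terms` are hypothetical, made up for this illustration.

```python
import re
from collections import Counter

# Hypothetical, deliberately small stop-word list; extend it for real use.
STOP_WORDS = {
    "a", "an", "the", "is", "are", "do", "does", "we", "i", "our", "for",
    "to", "of", "how", "what", "can", "it", "in", "my", "with", "and",
    "or", "any", "that", "on", "there",
}


def extract_questions(post: str) -> list[str]:
    """Return the sentences in a post that end with a question mark."""
    sentences = re.findall(r"[^.!?]*\?", post)
    return [s.strip() for s in sentences if s.strip()]


def recurring_terms(posts: list[str], top_n: int = 5) -> list[tuple[str, int]]:
    """Count how many posts ask a question containing each term.

    Each term is counted once per post, so one long rant
    cannot dominate the ranking.
    """
    counts: Counter[str] = Counter()
    for post in posts:
        terms_in_post = set()
        for question in extract_questions(post):
            for word in re.findall(r"[a-z']+", question.lower()):
                if word not in STOP_WORDS and len(word) > 2:
                    terms_in_post.add(word)
        counts.update(terms_in_post)
    return counts.most_common(top_n)


# Made-up sample posts, in the spirit of the print supplier example.
sample_posts = [
    "We have a trade show next month. How do we get a brochure printed fast?",
    "Our letterheads look embarrassing. Who prints a decent brochure and letterhead?",
    "Is there a cheap way to print a brochure for a trade event?",
]
print(recurring_terms(sample_posts, top_n=3))
```

A count like this only tells you where to look. You still have to read the actual posts to get the language people use.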

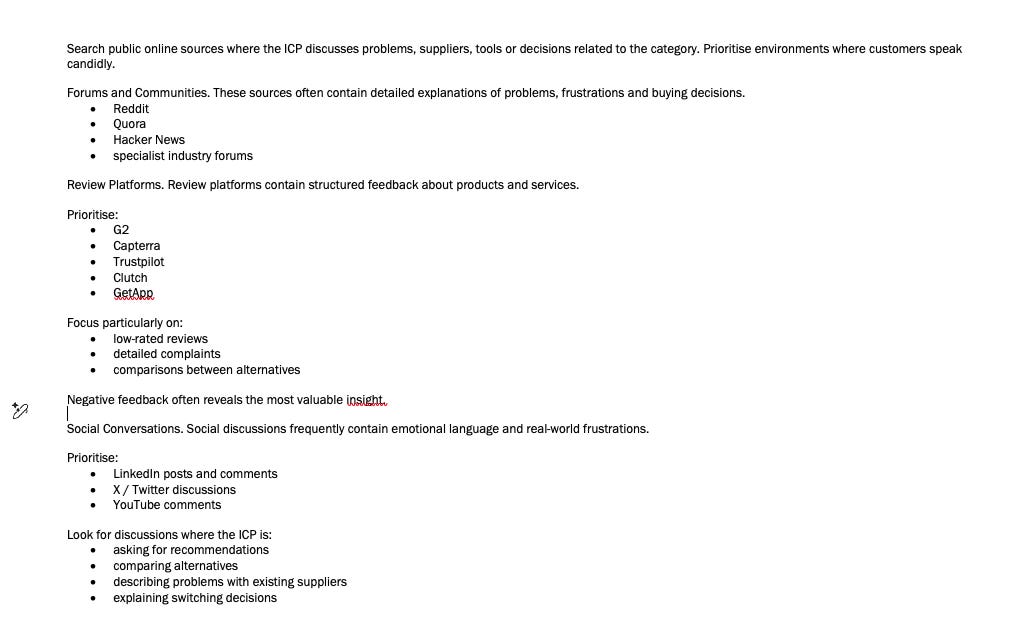

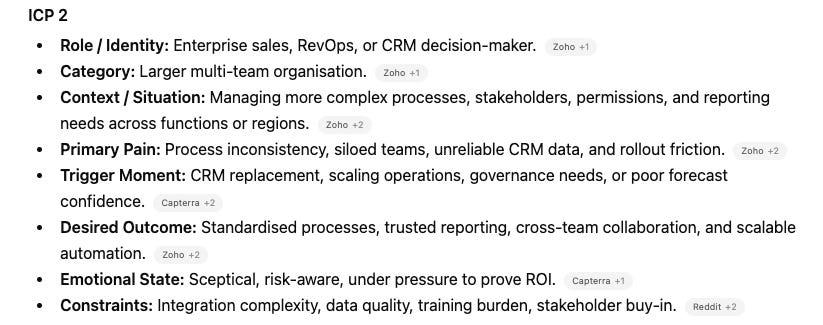

What people are really asking about Zoho CRM’s category.

Synthetic Research, Done Properly

From that foundation, you can build synthetic ICP characters trained on what real people are saying. You can use those characters to interrogate messaging alignment, test pricing assumptions, assess product market fit, and review how a brand is positioned against the needs it claims to solve.

The ICP profile created by HatGPT to interrogate.

There are tools being built specifically for this.

Evidenza, founded by the people behind the LinkedIn B2B Institute, is set up as a synthetic way of doing brand tracking surveys and category buyer surveys. It’s a serious attempt to solve a real problem, and the underlying thinking is sound.

For me though, the limitation is inherent to how LLMs work.

They run to the middle. They pull out the average, the most likely, the statistically representative response.

For quantitative brand tracking, that might be directionally useful, and of course it’s a fraction of the cost of a proper panel. But if you want the scruffy edges, the outlier behaviour, the thing that doesn’t fit the pattern and turns out to be your most interesting insight, synthetic research won’t reliably give you that.

That said, the margins are closing. The speed is incomparable, and the cost is minimal.

Depending on budget and timeline, there’s a strong case for using synthetic research as a first pass, or as a directional check before committing to full research spend.

But I use it for qual.

I use it to ask questions, review messaging, test pricing structures, and assess product market fit. For quant, with statistical confidence at the edges, you still need the real thing.

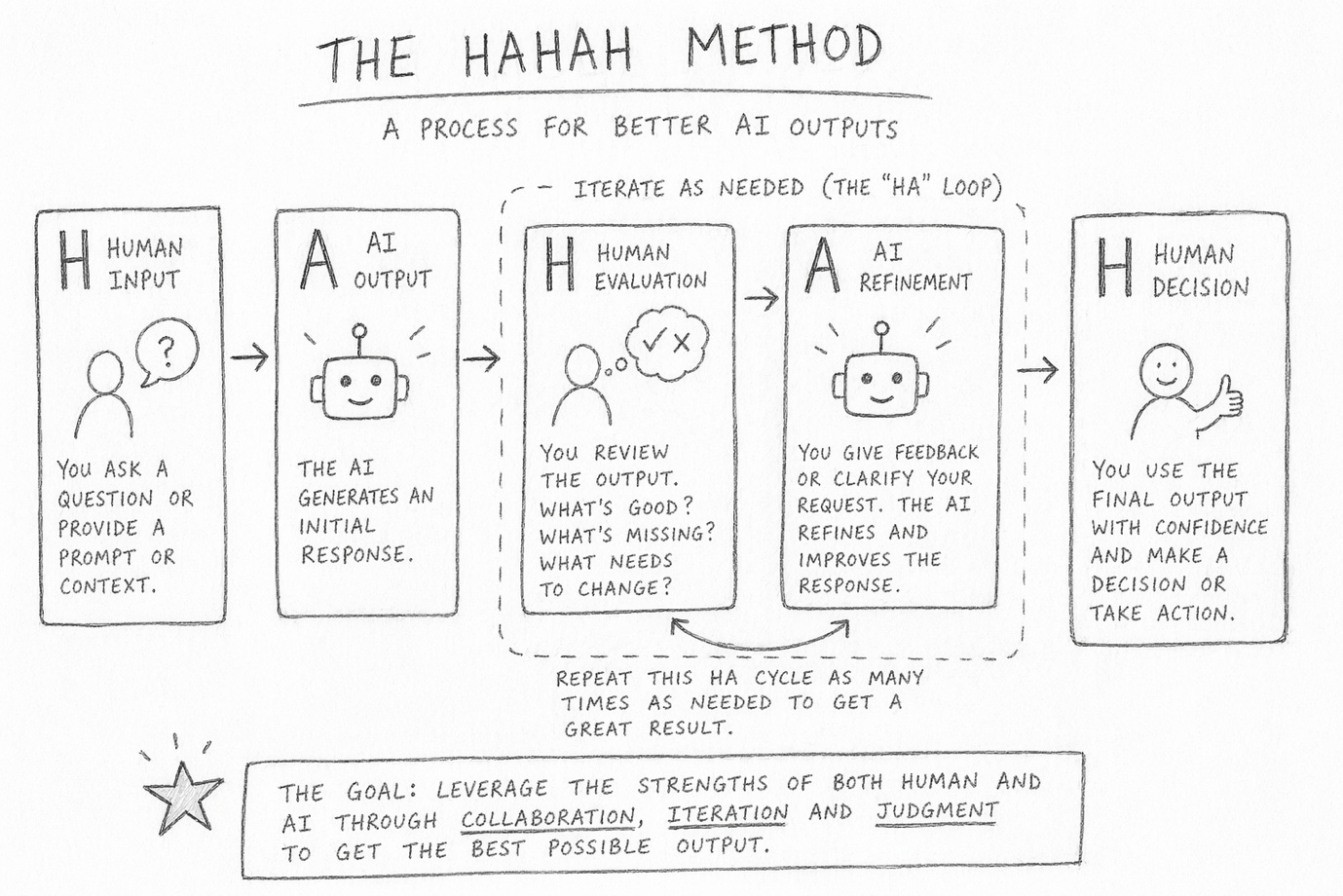

The HAHAH Model

The other thing that changed how I work with AI was learning about what John Bennett calls the HAHAH model in his book Don’t Surrender Your Thinking. It sounds like something a motivational speaker would put on a slide, and John is certainly a motivator, but the underlying logic is right.

Human input. AI output. Human review. AI revision. Human acceptance.

My first input is always to tell it, in as much detail as possible, what I want to achieve and why. Then I ask it how it would go about creating an instruction block for a custom GPT: what assumptions it would make, what priorities it would work to, and what external data or knowledge it needs. Finally, I ask it to ask me any questions that would help improve the process.

That way, you are not only getting the best out of the AI, you are getting it to tell you how it intends to work. Which means you know in advance the areas where you need to top-load correct thinking.

The human review and AI revision steps repeat as many times as you need until the output is genuinely acceptable.

It’s collaboration, not instruction. You’re not typing a prompt and publishing whatever comes back. You’re running a feedback loop, correcting the model’s assumptions each time, pushing it closer to what you actually want.
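If it helps to see the loop written down, here is a rough sketch of the five steps as a function. It is an illustration only. The function name `hahah_loop` and the `generate`, `review` and `revise` callables are hypothetical stand-ins for "ask the model", "a human reads it" and "ask the model again with the feedback". No real AI library is assumed.

```python
from typing import Callable, Optional


def hahah_loop(
    brief: str,
    generate: Callable[[str], str],
    review: Callable[[str], Optional[str]],
    revise: Callable[[str, str], str],
    max_revisions: int = 5,
) -> tuple[str, int]:
    """Run human input -> AI output -> human review -> AI revision -> human acceptance.

    `review` returns feedback text, or None when the human accepts the draft.
    Returns the accepted draft and the number of revisions it took.
    """
    draft = generate(brief)  # AI output, produced from the human input
    revisions = 0
    while True:
        feedback = review(draft)  # human review
        if feedback is None:
            return draft, revisions  # human acceptance
        if revisions >= max_revisions:
            raise RuntimeError("Draft not accepted; rethink the brief.")
        draft = revise(draft, feedback)  # AI revision
        revisions += 1


# Toy stand-ins so the sketch runs without any model behind it.
def toy_generate(brief: str) -> str:
    return f"Draft for: {brief}"


def toy_review(draft: str) -> Optional[str]:
    return None if "[revised]" in draft else "Use the customer's own words."


def toy_revise(draft: str, feedback: str) -> str:
    return f"{draft} [revised] ({feedback})"


final_draft, rounds = hahah_loop(
    "pricing page messaging", toy_generate, toy_review, toy_revise
)
print(rounds, final_draft)
```

The cap on revisions is a design choice in this sketch, not part of the HAHAH model. The idea is that if a draft keeps failing review, the brief is probably the problem.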

The HAHAH method, as created by AI. And yes, it took several iterations to be not shit.

HatGPT went through that iteration process many times when I first built it, and it still does.

The instructions get refined. The knowledge base gets updated. The output gets better. That’s not a bug in how AI works, it’s the feature. If you treat it as a one-shot tool, you’ll get one-shot results.

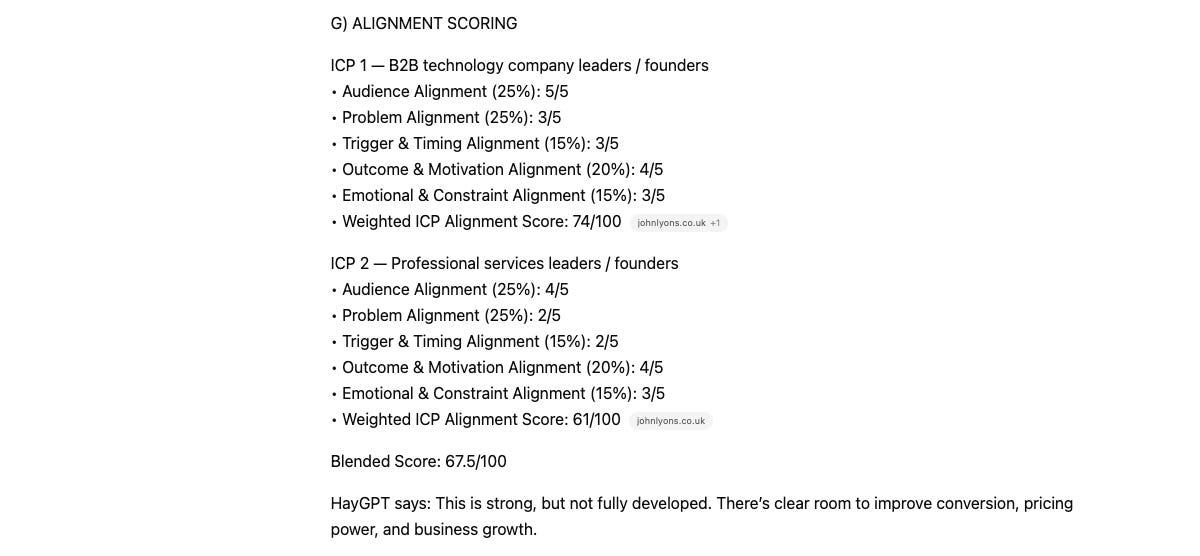

What You Actually Get At The End

HatGPT takes a URL. It summarises what the business does, identifies the apparent ICP from the site, trains itself on what that ICP’s needs actually are based on real forum and community data, builds one or two synthetic characters, and interrogates them to assess product market fit. Then it produces a score, with a clear steer on where the gaps are.
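As a very rough illustration of what "a score, with a clear steer on where the gaps are" could look like, here is a toy version. This is not HatGPT's scoring method, which isn't described here. It assumes a list of market needs pulled from community research and the brand's messaging as plain text, and simply checks which needs the messaging mentions. `FitReport` and `assess_fit` are hypothetical names.

```python
from dataclasses import dataclass


@dataclass
class FitReport:
    score: float      # share of market needs the messaging mentions, 0.0 to 1.0
    gaps: list[str]   # needs the messaging never mentions


def assess_fit(market_needs: list[str], messaging: str) -> FitReport:
    """Crude check: does the brand's messaging mention each market need?

    Uses a case-insensitive substring match, so it only catches needs
    expressed in the same words the market uses.
    """
    text = messaging.lower()
    gaps = [need for need in market_needs if need.lower() not in text]
    covered = len(market_needs) - len(gaps)
    score = covered / len(market_needs) if market_needs else 0.0
    return FitReport(score=score, gaps=gaps)


# Made-up inputs, following the print supplier example.
needs = ["brochure", "letterhead", "fast turnaround"]
site_copy = "Premium B2B print services. Brochure design and fast turnaround."
report = assess_fit(needs, site_copy)
print(round(report.score, 2), report.gaps)
```

The exact-words limitation is deliberate in this sketch. If the market says "letterhead" and the site says "corporate stationery", that mismatch is a gap worth knowing about.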

HatGPT ready to be put to work.

The reason product market fit is the output, rather than a content calendar or a brand audit, is deliberate.

It’s a concept that founders and tech businesses connect with immediately. They know their product. They’re usually far less clear on whether the market can actually see their problems being solved. The answer, most of the time, is that it can’t. Not clearly enough, anyway.

That gap is what good marketing closes. Not with more content. With clearer thinking about what the market needs and whether the brand is positioned to meet it.

The TL;DR Version

AI is incredibly useful when you treat it as a junior researcher who needs a proper brief, a clear set of principles to work from, and a trained eye over the output before anything goes anywhere near a client or a channel.

It’s commercially useless when you treat it as a content vending machine. Which, unfortunately, is how most people are using it right now.

Give it better instructions. Point it at better sources. Use it for research, not production. Iterate properly. Then decide what to do with what it finds.

That’s it. The rest is just prompting.

If you want to take HatGPT for a run, you can find it at www.hatgpt.co.uk